DigiAssess is a digital assessment platform that enables institutions to design, conduct, evaluate, and improve assessments, including examinations, results, learning outcomes, accreditation, and quality assurance.

Assessment in higher education has become a critical function, yet it remains one of the least evolved areas in institutional digital transformation. While Learning Management Systems have successfully digitized content delivery and student engagement, assessment continues to be handled through limited LMS capabilities or fragmented workflows.

This creates a structural gap.

Assessment is not just about conducting exams. It is about validating learning outcomes, ensuring academic integrity, and meeting accreditation requirements. When these responsibilities are managed within systems not designed for evaluation, inconsistencies and risks begin to emerge.

The issue becomes more visible in high-stakes environments, where institutions must ensure fairness, standardization, and online exam integrity at scale.

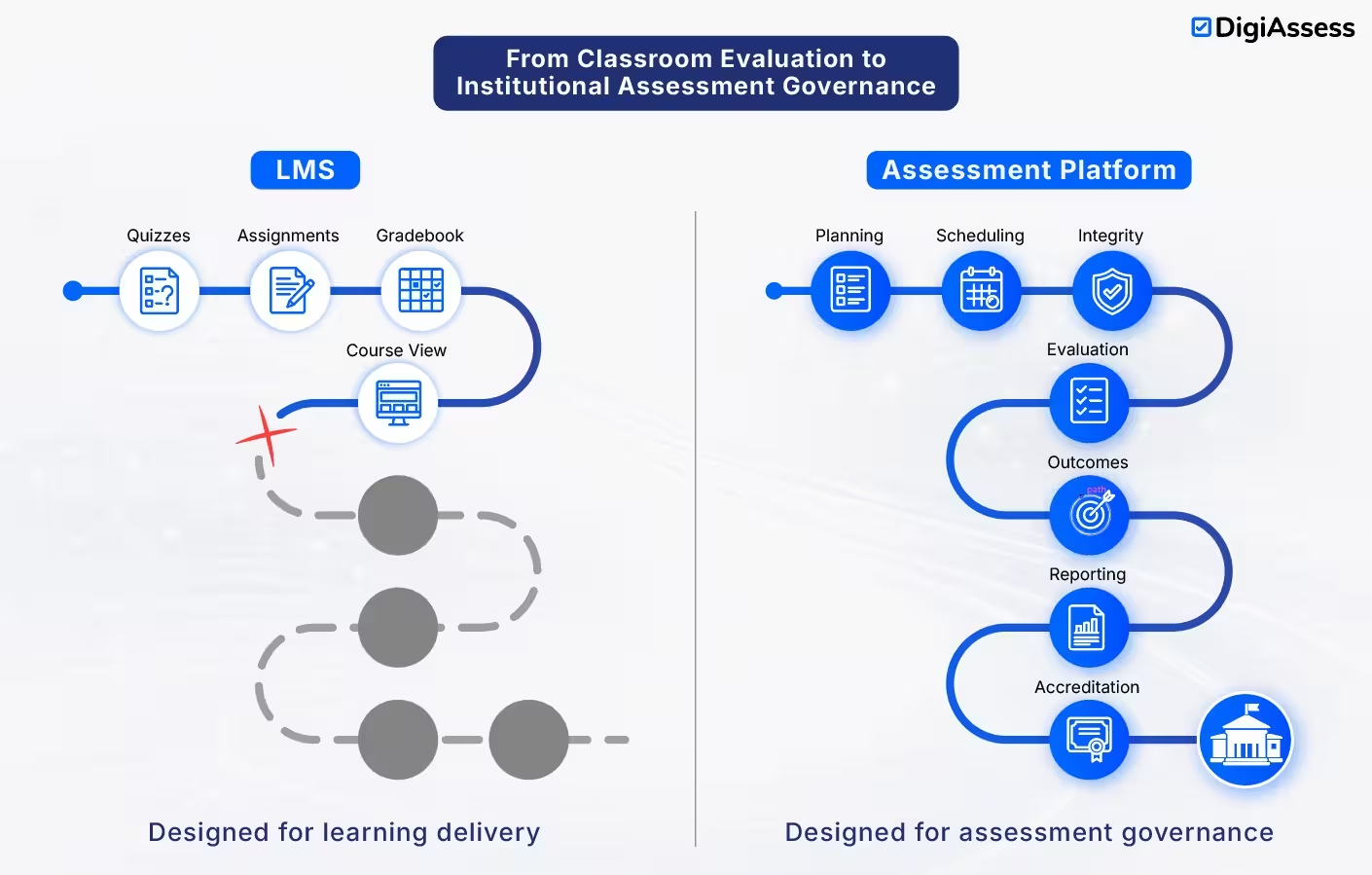

The core problem is not technological. It is architectural. LMS platforms are built for learning, not for functioning as a high-stakes assessment system.

As expectations around accountability and outcomes increase, institutions are recognizing the need for a dedicated assessment layer that operates independently while integrating seamlessly within the academic ecosystem.

Learning Management Systems have become foundational to digital transformation in universities, enabling institutions to manage content delivery, student engagement, and course administration at scale. Their adoption has been rapid and, in many cases, institution-wide.

However, this widespread reliance has led many institutions to extend LMS platforms into areas they were not designed for, particularly assessment. While LMS tools can support quizzes and basic evaluations, they fall short when used as a full exam management system.

The limitations of LMS in assessment are structural, not functional. These platforms are built to facilitate learning, not to validate outcomes at scale. As assessment complexity increases, institutions begin to face critical gaps in exam integrity, outcome alignment, analytics, accreditation reporting, and consistency at scale.

These are not edge cases. They represent baseline expectations in modern academic environments.

This creates a growing gap between academic expectations and system capabilities. What starts as a convenient extension quickly becomes an operational constraint.

The issue is not LMS adoption, but overextension. As assessment stakes rise, institutions require systems purpose-built for reliability, integrity, and governance.

High-stakes assessments operate under a very different set of expectations compared to routine academic evaluations. These assessments influence progression decisions, certifications, licensing outcomes, and institutional credibility. As a result, they require a level of control, standardization, and traceability that goes far beyond what traditional LMS platforms are designed to support.

When institutions rely solely on LMS platforms as a high-stakes assessment system, the gaps are no longer operational inconveniences. They become structural risks.

Ensuring online exam integrity requires more than timed quizzes and restricted access. It demands continuous identity verification, environment monitoring, and behavioral analysis. Most LMS platforms lack built-in capabilities for advanced online proctoring, making it difficult to detect malpractice, impersonation, or collusion at scale.

As assessment stakes increase, this becomes a credibility issue rather than a technical limitation.

Modern education models are increasingly outcome-focused. Institutions are expected to demonstrate how assessments map to competencies, learning objectives, and program-level outcomes.

LMS-based assessments are typically linear and isolated. They do not support structured blueprinting or systematic question bank management aligned to outcomes. This leads to inconsistent evaluation standards across courses and departments.

Most LMS platforms provide basic scoring and completion data. While useful for tracking participation, this level of reporting is insufficient for institutional decision-making.

A robust digital assessment platform must provide deep exam analytics, including question-level insights and cohort benchmarking.

Without these, institutions lack visibility into the effectiveness of their evaluation processes.

Accreditation bodies and regulatory frameworks require detailed documentation of assessment processes. This includes how assessments are designed, delivered, evaluated, and reviewed.

LMS platforms are not built to function as an accreditation reporting system. As a result, institutions often rely on manual processes to compile evidence, increasing both workload and the risk of inconsistencies.

As institutions grow, so does the complexity of managing assessments across multiple programs, campuses, and cohorts. LMS-based systems struggle to maintain consistency at scale, especially when different faculty members adopt varied approaches to evaluation.

This leads to fragmentation in assessment quality and governance.

An LMS can support learning workflows effectively. But it cannot independently function as a reliable system for high-stakes assessment. The requirements are simply too specialized, and the risks too significant to ignore.

As institutions begin to recognize the limitations of LMS-driven evaluation, the next logical question emerges.

If the LMS is not designed for assessment, then what is?

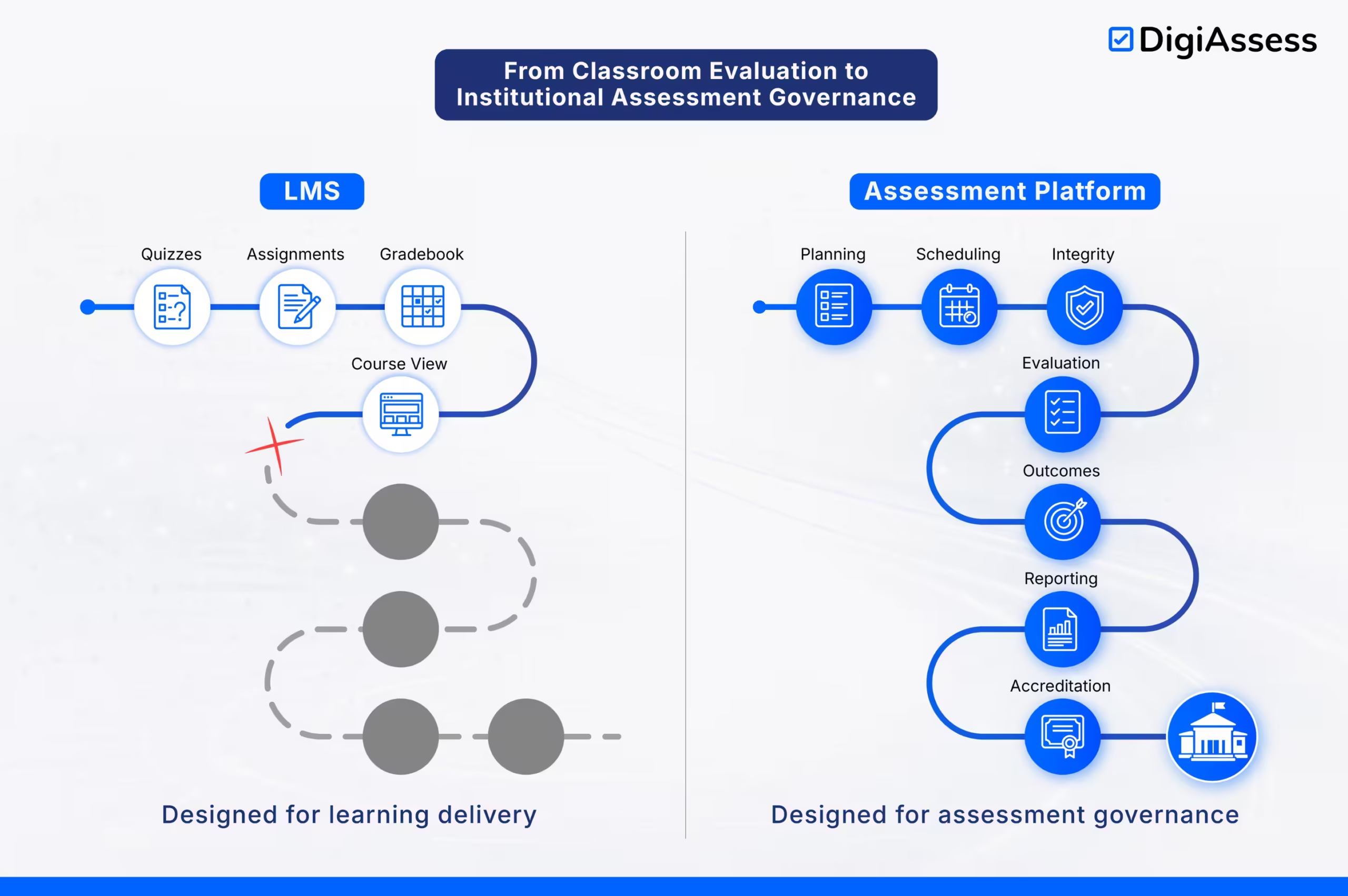

The answer lies in understanding that learning and assessment are two distinct academic functions. While they are closely related, they operate under different constraints and require different system architectures. This is where the distinction between an LMS and a digital assessment platform becomes important.

A direct comparison helps clarify this difference.

| Capability | Learning Management System (LMS) | Dedicated Assessment System |
| --- | --- | --- |
| Primary Purpose | Learning delivery and course management | Outcome validation and evaluation governance |
| System Orientation | Content-centric | Assessment-centric |
| Exam Integrity | Basic controls (timers, navigation limits) | Advanced controls with AI-based online proctoring, identity verification, and monitoring |
| Assessment Type | Suitable for low-stakes assessments | Designed for high-stakes assessments |
| Question Design | Simple quizzes and assignments | Structured question bank management with blueprint-driven assessments |
| Outcome Alignment | Limited support | Full outcome-based assessment mapping to competencies and learning objectives |
| Analytics Depth | Basic scores and completion tracking | Advanced exam analytics with question-level insights and cohort benchmarking |
| Reporting Capability | Limited and manual | Built-in accreditation reporting system with audit-ready outputs |
| Scalability | Moderate, varies by usage | Designed for large-scale, multi-cohort secure online exams |
| Standardization | Depends on faculty usage | Centralized governance ensures consistency across the institution |
| Compliance Readiness | Weak | Strong, structured, and traceable |
| Integration Role | Works alongside ERP systems | Integrates with ERP and LMS as a dedicated assessment layer |

Both are essential. But they are not interchangeable.

As institutions move beyond LMS-centric evaluation, the focus shifts from tools to architecture. The question is no longer whether assessments can be conducted digitally, but whether they can be conducted with the level of control, consistency, and insight that modern academic environments demand.

A true digital assessment platform is not defined by isolated features. It is defined by how well it supports the entire assessment lifecycle, from design to delivery to reporting.

At the foundation is the ability to conduct secure online exams across diverse environments. Institutions must support multiple modes of delivery, including remote, on-campus, and controlled offline scenarios. Scalability is critical, especially when managing large cohorts across programs and geographies.

A reliable exam management system ensures that assessments can be executed without disruption, regardless of scale.

Maintaining online exam integrity requires continuous monitoring and verification. Modern systems integrate AI-assisted online proctoring that tracks candidate behavior, detects anomalies, and supports live or recorded invigilation.

This shifts integrity from a reactive process to a proactive one.

Assessment quality depends heavily on how questions are designed and organized. A robust system enables centralized question bank management, where questions can be categorized by:

More importantly, it supports blueprint-driven assessments, ensuring that every exam aligns with defined outcomes and competencies.
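The idea of checking an exam against a blueprint can be illustrated with a minimal Python sketch. Everything here is an assumption made for illustration: the `Question` fields, the outcome labels such as `LO1`, and the `blueprint_gaps` helper are hypothetical and do not describe how DigiAssess or any specific product models its question bank.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    """One item in a hypothetical question bank."""
    qid: str
    outcome: str      # learning outcome the question is mapped to
    difficulty: str   # illustrative tag, e.g. "easy", "medium", "hard"


def blueprint_gaps(exam, blueprint):
    """Return {outcome: shortfall} for outcomes the exam under-covers.

    `blueprint` maps each outcome to the minimum number of questions
    the exam must contain for that outcome.
    """
    counts = Counter(q.outcome for q in exam)
    return {
        outcome: required - counts[outcome]
        for outcome, required in blueprint.items()
        if counts[outcome] < required
    }


# A draft exam drawn from the bank, and the blueprint it must satisfy.
exam = [
    Question("Q1", "LO1", "easy"),
    Question("Q2", "LO1", "hard"),
    Question("Q3", "LO2", "medium"),
]
blueprint = {"LO1": 2, "LO2": 2, "LO3": 1}

print(blueprint_gaps(exam, blueprint))  # {'LO2': 1, 'LO3': 1}
```

An empty result means the draft meets the blueprint. A real system would likely check more dimensions (difficulty mix, marks per outcome), but the principle of validating an exam against a declared structure before delivery is the same.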

Institutions are increasingly measured on outcomes, not just completion. A modern platform must support outcome-based assessment, linking performance directly to learning objectives.

This is supported by deep exam analytics, including question-level insights and cohort benchmarking.

These insights enable continuous academic improvement.
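As one illustration of what a question-level insight can look like, the sketch below computes two classical item-analysis measures: item difficulty (the proportion of candidates answering correctly) and a simple upper-minus-lower discrimination index. The choice of these metrics, the function names, and the sample data are assumptions for illustration; the split into top and bottom halves is a simplification of the usual practice of comparing top and bottom scoring groups.

```python
def item_difficulty(responses):
    """Proportion of candidates answering each question correctly.

    `responses` is a list of per-candidate lists of 0/1 scores,
    one entry per question, all of the same length.
    """
    if not responses:
        raise ValueError("at least one candidate is required")
    n = len(responses)
    return [sum(column) / n for column in zip(*responses)]


def discrimination_index(responses, item):
    """Upper-minus-lower discrimination for the question at index `item`.

    Candidates are ranked by total score; the index is the difference in
    proportion correct between the top half and the bottom half.
    """
    if len(responses) < 2:
        raise ValueError("at least two candidates are required")
    ranked = sorted(responses, key=sum, reverse=True)
    half = len(ranked) // 2
    upper, lower = ranked[:half], ranked[-half:]
    p_upper = sum(r[item] for r in upper) / half
    p_lower = sum(r[item] for r in lower) / half
    return p_upper - p_lower


# Hypothetical scores: four candidates, three questions (1 = correct).
scores = [
    [1, 1, 1],
    [1, 1, 0],
    [1, 0, 0],
    [1, 0, 1],
]

print(item_difficulty(scores))          # [1.0, 0.5, 0.5]
print(discrimination_index(scores, 1))  # 1.0
print(discrimination_index(scores, 2))  # 0.0
```

In this sample, the first question is answered correctly by everyone and so tells reviewers nothing about differences between candidates, while the second separates stronger from weaker candidates cleanly. Flags like these are the kind of signal that lets faculty revise or retire individual questions.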

Regulatory and accreditation requirements demand structured and consistent documentation. A dedicated system functions as an accreditation reporting system, generating audit-ready reports that capture the full assessment lifecycle.

This reduces manual effort while improving compliance reliability.

If you’re evaluating how to move beyond LMS-based assessment, a detailed breakdown can help clarify the gaps and next steps.

As assessment complexity increases, institutions that continue to rely solely on LMS-driven workflows begin to encounter risks that are not immediately visible but are highly consequential over time.

These risks are not limited to operational inefficiencies. They directly impact academic credibility, regulatory standing, and institutional reputation.

Without robust systems to enforce online exam integrity, institutions expose themselves to malpractice risks that are difficult to detect and even harder to defend. Basic controls are no longer sufficient in high-stakes environments where assessments influence progression and certification.

Over time, this creates doubt around the validity of outcomes.

Accreditation bodies expect institutions to demonstrate structured and consistent assessment practices. Without a dedicated accreditation reporting system, evidence generation becomes fragmented and manual.

This increases the likelihood of:

In regulated environments, these gaps can have serious consequences.

When assessment processes are not centralized, different departments and faculty members adopt varying approaches. This leads to inconsistency in difficulty levels, evaluation criteria, and grading standards.

Without a system that enforces outcome-based assessment, institutions struggle to maintain fairness and comparability across cohorts.

In the absence of a structured exam management system, faculty and administrators compensate through manual processes. This includes managing question banks, compiling reports, and coordinating evaluations.

The result is reduced efficiency, higher error rates, and limited scalability.

Without advanced exam analytics, institutions operate with limited insight into how assessments perform. There is no clear understanding of:

This restricts the ability to improve academic quality in a systematic way.

These risks do not appear suddenly. They accumulate over time and become deeply embedded in institutional processes.

Addressing them requires more than incremental fixes. It requires a structural shift toward a dedicated assessment layer that can support integrity, consistency, and scalability at every level.

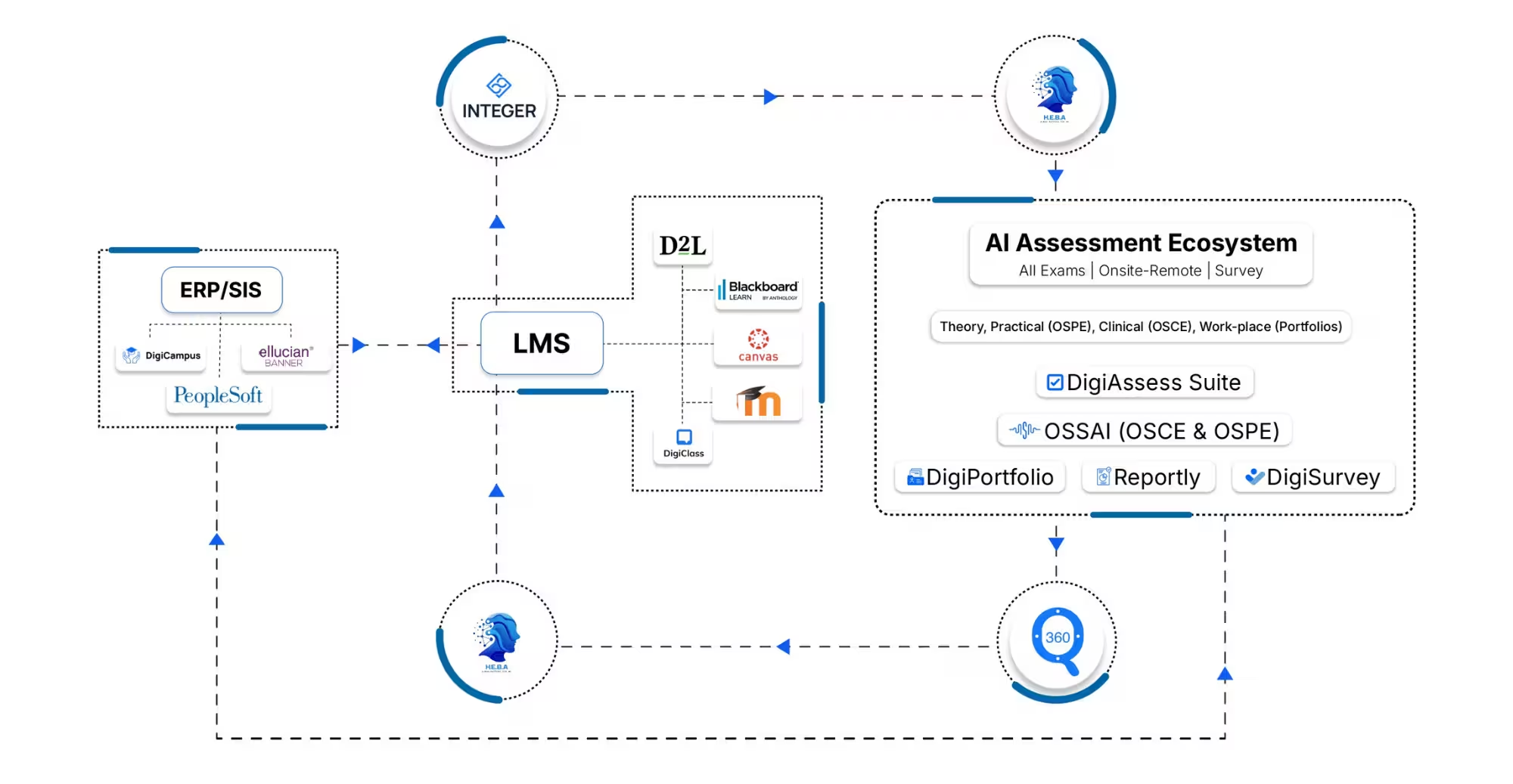

As institutions scale their digital capabilities, a clear pattern is emerging. High-performing academic environments are no longer built around a single system. They are structured as integrated ecosystems, where each layer serves a distinct and specialized function.

This shift is particularly evident in how institutions are rethinking assessment in higher education.

Instead of forcing the LMS to handle all academic workflows, institutions are adopting a layered model that separates responsibilities across three core systems.

Enterprise Resource Planning systems manage institutional data. This includes student records, enrollments, grades, and administrative workflows. The ERP acts as the central repository for structured data, ensuring consistency and traceability across the organization.

However, it does not manage how learning happens or how assessments are conducted.

The LMS continues to play a critical role in delivering education. It manages content delivery, student engagement, and course administration.

It is optimized for interaction and delivery. But as discussed earlier, it is not designed to function as a high-stakes assessment system.

This is where the transformation becomes significant.

A dedicated digital assessment platform functions as the validation layer within the ecosystem. It is responsible for:

More importantly, it ensures that every assessment is:

When these systems are integrated effectively, institutions achieve ERP-LMS integration that is both flexible and scalable.

This separation of concerns reduces system overload, improves governance, and ensures that each function is handled by a system designed specifically for it.

The result is not just operational efficiency. It is a structurally sound academic ecosystem where assessment is treated as a core institutional capability rather than an extension of learning tools.

LMS tools are great for teaching, but they often fall short during high-stakes evaluation. DigiAssess is a dedicated digital assessment platform designed to handle the complexity, security, and scale that modern institutions demand.

Stop treating assessments as an LMS add-on. Build a foundation of academic integrity with DigiAssess.

Fill out the form to see how DigiAssess transforms academic operations

Adopting a digital assessment platform is not simply a technology upgrade. It is a structural decision that affects academic workflows, governance models, and compliance readiness. Institutions that approach this as a feature comparison exercise often overlook critical long-term implications.

A more effective approach is to evaluate assessment systems based on alignment with institutional goals and operational realities.

A dedicated assessment layer must integrate seamlessly with existing ERP and LMS platforms. Strong ERP-LMS integration ensures that data flows consistently across systems without duplication or manual intervention.

Institutions should assess:

Assessment systems must support large-scale deployments without compromising security. This is especially important for secure online exams conducted across distributed environments.

Key considerations include:

Even the most advanced system will fail if it is not adopted effectively by faculty. Ease of use, intuitive workflows, and minimal training requirements are critical.

A well-designed exam management system should reduce administrative effort, not increase it.

Institutions should evaluate whether the platform provides meaningful exam analytics and supports outcome-based assessment.

This includes:

These capabilities are essential for continuous academic improvement.

Every institution operates within a unique regulatory and academic framework. The assessment platform must support customization while also functioning as a reliable accreditation reporting system.

This ensures that institutions can adapt to evolving requirements without restructuring their entire assessment process.

A structured evaluation across these dimensions allows institutions to move beyond short-term fixes and invest in a system that supports long-term academic and operational excellence.

The role of assessment in higher education has fundamentally changed. What was once treated as a supporting function is now central to how institutions validate outcomes, ensure accountability, and maintain credibility.

LMS-driven approaches may support basic evaluation needs, but they are not built for the demands of high-stakes assessment or for ensuring consistent online exam integrity. As expectations around quality and compliance increase, this gap becomes difficult to ignore.

Institutions are now shifting toward a more structured model by adopting a dedicated digital assessment platform within their ecosystem. This is not just about improving efficiency. It is about establishing control, standardization, and scalability in how assessments are designed and delivered.

Assessment is no longer an extension of learning systems. It is a core layer that defines how institutions measure and defend academic outcomes.

Book a Demo with DigiAssess and explore how a dedicated assessment platform can transform your academic operations.

+971 55 654 0099

©2026. All rights reserved by DigiAssess.